Summary: How are organizations handling the accelerating move of data to the cloud? What obstacles do they face in cleaning data for analytics, and what time constraints do companies face when preparing data for analytics, AI and Machine Learning (ML) initiatives? Here is a look at some insights from a recent Trifacta report that answer these questions.

Data has become a critical component of just about every aspect of business, and the amount of data is skyrocketing. In fact, 90% of the world’s data has been created in the last two years, and it’s expected that by 2020, 463 exabytes of data will be created every day from wearables, social media networks, communications (business and consumer), transactions and connected devices. The explosion in the volume and, more importantly, the diversity of data is instrumental in supporting the future of Artificial Intelligence (AI) and in accelerating the automation of data analysis, but it is also creating the obstacles that enterprises currently face in adopting AI. Most enterprises believe there is great potential to gain efficiencies and improve data-driven decision-making, but as their use cases multiply, much work remains to remove the obstacles to adoption. A recent report by Trifacta reveals how these challenges are inhibiting the overall success of these projects and the ability to work with data more efficiently to accelerate decision-making. Here is a look:

DATA INACCURACY IS INHIBITING AI PROJECTS

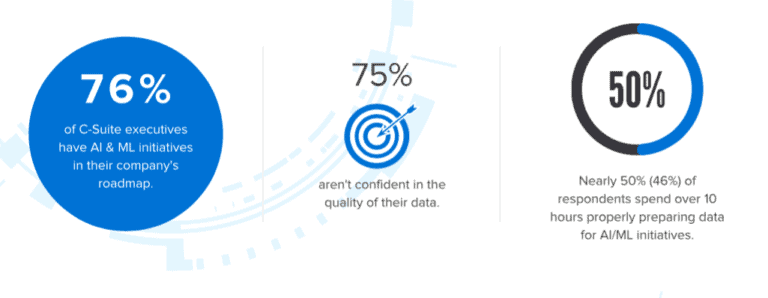

The time-consuming nature of data preparation is a detriment to organizations: data scientists are spending too much time preparing data and not enough time analyzing it. Almost half (46%) of respondents report spending more than 10 hours preparing data for analytics and AI/ML initiatives, while others spend upwards of 40 hours each week on data preparation alone. Although data preparation is a time-consuming, inefficient process, it’s absolutely vital to the success of every analytics project. Among the leading consequences of data inaccuracy are miscalculated demand (59%) and targeting the wrong prospects (26%). Decisions made from data would improve if organizations were able to incorporate a broader set of data into their analysis, such as unstructured third-party data from customers, semi-structured data or data from relational databases.

C-SUITE HAS TAKEN NOTICE

Simply put, if the quality of data is bad, analytics and AI/ML initiatives are going to be worthless. While 60% of C-suite respondents state that their company frequently leverages data analysis to drive future business decisions, 75% aren’t confident in the quality of their data. About one-third state that poor data quality caused analytics and AI/ML projects to take longer (38%), cost more (36%) or fail to achieve the anticipated results (33%). With 71% of organizations relying on data analysis to drive future business decisions, these inefficiencies are draining resources and inhibiting the ability to glean insights that are crucial to overall business growth.

RISE OF AI AND ML PUSH CLOUD ADOPTION

The benefits of the cloud are hard to overstate, particularly when it comes to quickly scaling analytics and AI/ML initiatives, and that scale presents a challenge for today’s siloed data cleansing processes. Cloud migration is already widespread: 66% of respondents state that all or most of their analytics and AI/ML initiatives are running in the cloud, 69% report that their organization uses cloud infrastructure for data management, and 68% of IT pros use the cloud to store more or all of their data, a trend that’s only going to grow. Two years from now, 88% of IT professionals estimate that all or most of their data will be stored in the cloud.

“The growth of cloud computing is fundamental to the future of AI, analytics, and Machine Learning initiatives. Unfortunately, the pace and scale at which this growth is happening underscore the need for coordinated data preparation, as data quality remains one of the largest obstacles in every organization’s quest to modernize their analytics processes in the cloud.”

- Adam Wilson, CEO, Trifacta.

......

Full Text: Dataconomy
